I wish there were one consolidated document of settings to change for ESXi 5.1 and EqualLogic. If anyone out there has more settings, please let me know!

To start, here is the list from this thread: http://en.community.dell.com/techcenter/storage/f/4466/t/19459676.aspx

1.) Delayed ACK DISABLED

2.) Large Receive Offload DISABLED

3.) Make sure you are using either VMware Round Robin (with IOs per path changed to 3) or, preferably, MEM 1.1.0.

Additionally

4.) Set LoginTimeout to 15 or 30 seconds.
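If you stay with VMware Round Robin instead of MEM (MEM installs its own path selection plugin, so skip this if you use it), item 3 can be applied per LUN from the ESXi shell. This is a minimal sketch assuming ESXi 5.x `esxcli`; the `run` helper, the `APPLY` variable and the function name are hypothetical, and the device ID you pass in is a placeholder for one of your own EqualLogic volumes.

```shell
#!/bin/sh
# Sketch (hypothetical helper names): put one LUN on Round Robin and
# switch paths every 3 IOs instead of the default 1000.
# Dry run by default: commands are printed, not executed.
# Set APPLY=1 to actually run them on an ESXi 5.x host.

run() {
  if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

set_rr_iops3() {
  dev="$1"   # placeholder device ID, e.g. naa.6090a0...
  # Use the VMware Round Robin path selection policy for this device
  run esxcli storage nmp device set --device="$dev" --psp=VMW_PSP_RR
  # Change paths after every 3 IOs
  run esxcli storage nmp psp roundrobin deviceconfig set --device="$dev" --type=iops --iops=3
}
```

Usage: `set_rr_iops3 naa.<your-volume-id>` prints the two commands; `APPLY=1 set_rr_iops3 naa.<your-volume-id>` runs them.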

I contacted EqualLogic support and they replied:

Solution Title: HOWTO: Disabling TCP Delayed ACK may improve read performance with ESX 3.x/4.x/5.x software iSCSI initiator.

Solution Details: ESX allows you to disable delayed ACK on your ESX host through a configuration option, which may improve the read performance of storage attached through the ESX software iSCSI initiator. PLEASE NOTE: this change requires a reboot of the ESX server to take effect.

Disabling Delayed Ack in ESX 4.0, 4.1, and 5.x

1. Log in to the vSphere Client and select the host.

2. Navigate to the Configuration tab.

3. Select Storage Adapters.

4. Select the iSCSI vmhba to be modified.

5. Click Properties.

6. Modify the delayed Ack setting using the option that best matches your site’s needs.

Choose one of the options below (I, II or III), then move on to step 7 after making the changes:

Option I:

Modify the delayed Ack setting on a discovery address (recommended).

A. On a discovery address, select the Dynamic Discovery tab.

B. Select the Server Address tab.

C. Click Settings.

D. Click Advanced.

7. In the Advanced Settings dialog box, scroll down to the delayed Ack setting.

8. Uncheck Inherit From parent. (Does not apply to global modification of delayed Ack.)

9. Uncheck DelayedAck.

10. Reboot the ESX host.
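The same change can be made without the GUI. This sketch sets DelayedAck on the software iSCSI adapter itself, i.e. the adapter-wide (global) setting rather than the per-discovery-address setting of Option I, and assumes the key is settable on your build (check with `esxcli iscsi adapter param get --adapter=<vmhba>`). The `run` helper, `APPLY` variable and function name are hypothetical; `vmhbaXX` is a placeholder. As noted below, targets that were already discovered may keep the old value, and a reboot is still required.

```shell
#!/bin/sh
# Sketch (hypothetical helper names): disable DelayedAck adapter-wide.
# Dry run by default; set APPLY=1 to execute on an ESXi 5.x host.

run() {
  if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

disable_delayed_ack() {
  # $1 = software iSCSI adapter name, e.g. vmhba37 (placeholder)
  run esxcli iscsi adapter param set --adapter="$1" --key=DelayedAck --value=false
}
```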

—

NOTE: From http://communities.vmware.com/message/2078917#2078917

One thing about Delayed ACK is that you have to verify that the change took effect. If you just change it, it appears that only new LUNs inherit the value (disabled). I find that, while in maintenance mode, removing the discovery address and any discovered targets (in the Static Discovery tab), then disabling Delayed ACK, re-adding the discovery address and rescanning resolves this.
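The remove/re-add workaround can be scripted with `esxcli`. This is a sketch under stated assumptions: it uses the adapter-wide DelayedAck key instead of the GUI discovery-address setting, maintenance mode will only be entered once VMs are evacuated or powered off, and the function name, `run` helper, `APPLY` variable, adapter, group IP and target IQNs are all hypothetical placeholders for your own values.

```shell
#!/bin/sh
# Sketch (hypothetical names): remove discovery, disable DelayedAck,
# re-add discovery and rescan. Dry run by default; APPLY=1 executes.

run() {
  if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

rediscover() {
  adapter="$1"; group_ip="$2"; shift 2   # remaining args: target IQNs
  # 1. Enter maintenance mode (evacuate or power off VMs first)
  run esxcli system maintenanceMode set --enable=true
  # 2. Remove the dynamic discovery (send targets) address
  run esxcli iscsi adapter discovery sendtarget remove --adapter="$adapter" --address="$group_ip"
  # 3. Remove each previously discovered static target
  for iqn in "$@"; do
    run esxcli iscsi adapter discovery statictarget remove --adapter="$adapter" --address="$group_ip" --name="$iqn"
  done
  # 4. Disable DelayedAck while no targets are configured
  run esxcli iscsi adapter param set --adapter="$adapter" --key=DelayedAck --value=false
  # 5. Re-add the discovery address and rescan the adapter
  run esxcli iscsi adapter discovery sendtarget add --adapter="$adapter" --address="$group_ip"
  run esxcli storage core adapter rescan --adapter="$adapter"
}
```

Usage: `rediscover vmhba37 <group-ip> <iqn-1> <iqn-2>`, then reboot and verify as described next.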

At the ESX console, run:

vmkiscsid --dump-db | grep Delayed

All the entries should end with ='0' for disabled. (Note that there are two dashes before dump-db; the blog font renders them as a single long dash.)

I ran it and saw the same thing: only some of the connections had DelayedAck set correctly. So definitely remove the discovery address and static targets to fix it.
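Checking the dump by eye gets tedious with many connections. This sketch filters it and reports only the entries that do not end in ='0'; it assumes plain ASCII quotes in the real output, and the function name is hypothetical. It reads from stdin, so on the host you would run `vmkiscsid --dump-db | check_delayed_ack`.

```shell
#!/bin/sh
# Sketch (hypothetical name): flag DelayedAck entries that are not '0'.
# Exit status: 0 = all disabled, 1 = some still enabled, 2 = none found.

check_delayed_ack() {
  # Keep only the DelayedAck lines ("|| true" so no match is not fatal)
  entries="$(grep Delayed || true)"
  if [ -z "$entries" ]; then
    echo "no DelayedAck entries found"
    return 2
  fi
  # Drop the lines that end in ='0' (disabled); whatever is left is bad
  bad="$(printf '%s\n' "$entries" | grep -v "='0'\$" || true)"
  if [ -n "$bad" ]; then
    echo "$bad"
    return 1
  fi
  echo "all DelayedAck entries disabled"
}
```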

—

To disable LRO (from http://communities.vmware.com/thread/419234):

HOWTO: Disable Large Receive Offload (LRO) in ESX v4/v5

Solution Details

Within VMware, the following command will query the current LRO value:

# esxcfg-advcfg -g /Net/TcpipDefLROEnabled

To set the LRO value to zero (disabled):

# esxcfg-advcfg -s 0 /Net/TcpipDefLROEnabled

NOTE: a server reboot is required.

Info on changing LRO in the guest network is at http://docwiki.cisco.com/wiki/Disable_LRO; follow the procedure there to disable LRO.

Your guest VMs should now have normal TCP networking performance.
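The three host-side LRO steps (query, set, confirm) can be wrapped in one function to paste on each host. A minimal sketch; the function name, `run` helper and `APPLY` variable are hypothetical, while the `esxcfg-advcfg` calls are exactly the ones quoted above.

```shell
#!/bin/sh
# Sketch (hypothetical names): disable host LRO and show before/after.
# Dry run by default; set APPLY=1 to execute on the ESXi host.

run() {
  if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

disable_lro() {
  run esxcfg-advcfg -g /Net/TcpipDefLROEnabled    # current value
  run esxcfg-advcfg -s 0 /Net/TcpipDefLROEnabled  # 0 = LRO disabled
  run esxcfg-advcfg -g /Net/TcpipDefLROEnabled    # confirm new value
  echo "reboot the host for the change to take effect"
}
```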

—

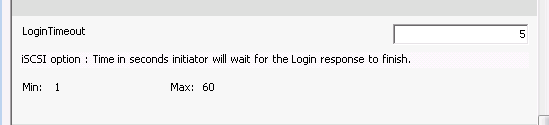

Set Login Timeout

This VMware 5.1 document points to KB article http://kb.vmware.com/kb/2007829:

- Go to Storage Adapters > iSCSI Software Adapter > Properties.

- Select Advanced and scroll down to LoginTimeout.

- Change the value from 5 seconds to a larger value, such as 15 or 30 seconds.
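The same setting is exposed through `esxcli` on 5.1 (on 5.0 only after the patch mentioned below). This sketch defaults to 30 seconds to match the steps above; pass 60 for the Dell MEM recommendation. The function name, `run` helper and `APPLY` variable are hypothetical, and `vmhbaXX` stands for your software iSCSI adapter.

```shell
#!/bin/sh
# Sketch (hypothetical names): set the iSCSI LoginTimeout and show the
# adapter parameters afterwards. Dry run by default; APPLY=1 executes.

run() {
  if [ "${APPLY:-0}" = 1 ]; then "$@"; else echo "$*"; fi
}

set_login_timeout() {
  adapter="$1"
  seconds="${2:-30}"   # default follows the "15 or 30 seconds" advice
  run esxcli iscsi adapter param set --adapter="$adapter" --key=LoginTimeout --value="$seconds"
  run esxcli iscsi adapter param get --adapter="$adapter"
}
```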

Addendum

—

On second review, the official documentation for the EqualLogic MEM (Rev 1.2, which covers vSphere 5.1), http://www.equallogic.com/WorkArea/DownloadAsset.aspx?id=11000, advises:

Deployment Considerations: iSCSI Login Timeout on vSphere 5.1 and 5.0

The default value of 5 seconds for iSCSI logins on vSphere 5.x is too short in some circumstances. For example: in a large configuration where the number of iSCSI sessions to the array is close to the limit of 1024 per pool. If a severe network disruption were to occur, such as the loss of a network switch, a large number of iSCSI sessions will need to be reestablished. With such a large number of logins occurring, some logins will not be completely processed within the 5 second default timeout period.

Dell therefore recommends applying patch ESXi500-201112001 and increasing the ESXi 5.0 iSCSI Login Timeout to 60 seconds to provide the maximum amount of time for such large numbers of logins to occur.

If the patch is installed prior to installing the EqualLogic MEM, the MEM installer will automatically set the iSCSI Login Timeout to the Dell recommended value of 60 seconds.

Automatic setup is nice in theory, but it doesn't appear to work with ESXi 5.1; I had to set mine to 60 manually.

I was able to find these three recommendations on the support portal here: https://support.equallogic.com/support/solutions.aspx?id=1444

They are:

HOWTO: Disable Large Receive Offload (LRO) in ESX v4/v5

HOWTO: Change Login timeout value ESXi v5.0 (requires ESXi patch ESXi500-201112001)

Good info, Michael.

Man. So many tweaks. This is the kind of stuff that always keeps me wondering whether my config is running optimally. How did you know to make these changes? I'm working through the ESXi 5.1 upgrade guide now. What is it like not having the GUI console on ESXi anymore? I'm not the best with esxcli or PowerCLI and have had to rely on them at times.

On a side note, Dell discovered that I had a batch of bad controllers in my two PS6000s. They overnighted me new ones and gave me instructions on how to replace them. It's a testament to EqualLogic how easy it is. Make sure you are in a lower-IOPS period, or better yet vMotion your VMs off the storage (I didn't have this luxury). Pull the idle controller, remove the 1GB miniSD chip from the old controller and pop it into the replacement. Plug it back in, and it takes about 2 minutes or less to sync with the active controller. Now here was the intimidating part: if I had not watched Dell reps do this numerous times, I wouldn't have been as willing. Pull the active controller and watch as it takes about 10 seconds to fail over to the idle one. I had Exchange and SQL running on these arrays and they didn't skip a beat.

Move the miniSD chip over and insert the new controller. Worked like a charm.

Yes, there are a lot of settings. Yes, it is hard to get it running optimally 🙂 I wish the KB articles would get rolled into the Dell install guides. I'm fairly confident about these settings mainly because I see them recommended so often, not through any real testing (which it would be nice if Dell did for us). ESXi 5.1 has really been a boring upgrade. I thought it would be quite different, but really, meh, not so much. Yeah, it's fun to pull the controller card. I have been torturing my new setup before it goes into production, testing failover of the switches, controller cards, lost links, HA, etc., sometimes all at the same time!

For ESXi 5, you can leave the SSH server and the ESXi Shell running all the time (enable them in the security profile), which gives you some of the old feel of connecting directly to the server. (Obviously security is looser, so make sure you have other ways to restrict access.) One alternative is to run the vMA appliance or the VMware vSphere CLI from your desktop.

I also like to raise the iSCSI RecoveryTimeout to the maximum, as I often find controller failovers take longer than 30 seconds:

esxcli iscsi adapter param set --adapter=vmhbaXX --key=RecoveryTimeout --value=120

You can set all the parameters through a console/SSH session. For all available options, check the GUI or run:

esxcli iscsi adapter param get --adapter=vmhbaXX

For many servers at once, I find it's quicker to paste in a batch of commands than to do lots of clicking.
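For the paste-a-batch approach, a small generator can print one `esxcli iscsi adapter param set` line per KEY=VALUE pair, followed by a `get` so you can check the result. This is a sketch: the function name is hypothetical, and the keys and values in the usage line are simply the ones discussed in this post; adjust them to your environment and adapter name.

```shell
#!/bin/sh
# Sketch (hypothetical name): generate esxcli commands to paste into an
# SSH session. Nothing is executed; the commands are only printed.

gen_iscsi_cmds() {
  adapter="$1"; shift              # remaining args are KEY=VALUE pairs
  for kv in "$@"; do
    key="${kv%%=*}"                # text before the first '='
    value="${kv#*=}"               # text after the first '='
    echo "esxcli iscsi adapter param set --adapter=$adapter --key=$key --value=$value"
  done
  # Finish with a get so the resulting values can be checked
  echo "esxcli iscsi adapter param get --adapter=$adapter"
}
```

Usage: `gen_iscsi_cmds vmhba37 LoginTimeout=60 RecoveryTimeout=120 DelayedAck=false > host-iscsi.txt`, then paste the file contents into each host's session.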

Another thing to be aware of: you need a heartbeat vSwitch under VMware 4.1 and 5.0, but according to the doc linked in the main article, you don't under 5.1 due to the changes in the iSCSI subsystem.

Just double-checking here: does the LRO-disable setting for the guest network still apply to vSphere 5.1 as well? I'm using PowerConnect switches.

I could not get your vmkiscsid command to work to check whether Delayed ACK was still enabled.

I then found this command:

vmkiscsid --dump-db | grep Delayed

and it worked. Thanks for all the tips!